Part 2 of 3: Building Tech Teams in the Era of AI

A few months ago I was on a call with a founder who was genuinely proud: “We bought Claude licenses for the whole team.” Great, I said. How’s it going? Long pause. “Well… some people use it. I think.”

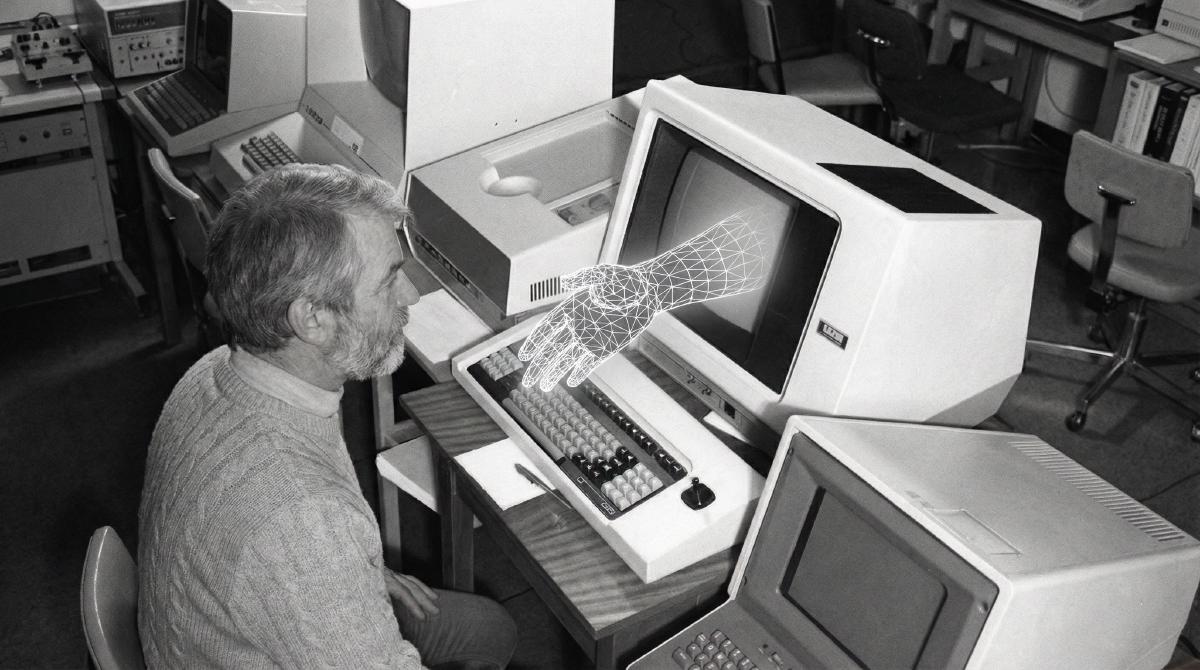

This is the state of AI adoption in most engineering teams right now. The tools are there. More than 80% of developers use or plan to use AI coding assistants. Cursor went from basically zero to a billion dollars in ARR faster than any SaaS product in history. GitHub Copilot is in 90% of Fortune 100 companies. The supply side of this equation is solved — there’s no shortage of incredibly powerful AI tools available to your engineers today.

The demand side, though? That’s where it gets messy.

Everyone’s adopting. Almost nobody’s good at it.

Here’s a stat that messes with the popular narrative: a rigorous study by METR gave experienced open-source developers AI tools to work on their own repositories, codebases they knew intimately. The result? They were 19% slower. And the kicker: they believed they were 20% faster.

This doesn’t mean AI tools are useless. Far from it. Across our team and colleagues at other companies, we saw team velocity gains ranging from 10% to 70% depending on the task. The median is probably around 40%. But that range is enormous, and the difference between 10% and 70% comes down to how you adopt, not what you adopt.

Buying licenses is the easy part. Building an AI adoption strategy that actually changes how your team works — that’s the real challenge. And almost nobody’s doing it well yet.
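To make the METR numbers above concrete, here is a tiny back-of-the-envelope sketch. It assumes "19% slower" means the task took 19% longer than it would have without AI, and "20% faster" means developers thought it took 20% less time. The function name and the 60-minute baseline are made up for illustration, not taken from the study.

```python
def perception_gap(baseline_minutes, actual_slowdown=0.19, believed_speedup=0.20):
    """Return (actual, believed, gap) task times in minutes.

    Assumption: both percentages are applied to the time the task
    would have taken without AI (the baseline).
    """
    actual = baseline_minutes * (1 + actual_slowdown)      # time really spent
    believed = baseline_minutes * (1 - believed_speedup)   # time people felt they spent
    return actual, believed, actual - believed


# Hypothetical task that takes one hour without AI.
actual, believed, gap = perception_gap(60)
print(f"actual: {actual:.1f} min, believed: {believed:.1f} min, gap: {gap:.1f} min")
```

Under those assumptions, a one-hour task really takes about 71 minutes while feeling like 48. That gap of roughly 23 minutes per hour is why self-reported speedups are a poor substitute for measuring.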

Champions, curious, and skeptics: you need all three

When I talk to founders about AI adoption in their engineering teams, they usually want everyone to be enthusiasts (like themselves, haha). “We need a team of AI champions!” Nope, you really don’t.

From what I’ve seen — and research roughly confirms this with a 30/50/20 split — your team naturally falls into three groups. About 30% are champions: they follow every AI release, they try every new tool on day one, they’re already building personal assistants on OpenRouter in their spare time. Whether or not the company has an AI policy, they’re using it anyway. They can’t resist.

Then there’s the curious middle — about 50%. These folks are pragmatic. They see AI as a tool, not a religion. They’ll use it when it helps, but they won’t chase every shiny thing just because it’s new. They’re the ones who actually build sustained adoption, taking what champions discover and turning it into repeatable practice.

And then there are the skeptics, maybe 20%. Their default position is: “What are you even talking about? I’m doing fine without this stuff.” And precisely because of this, they’re absolutely essential.

A team of all champions is chaos. Infinite experimentation, zero reflection. Somebody needs to say “this output is garbage and here’s why.” A UCI research study found something fascinating: people with a negative bias toward AI actually demonstrated greater vigilance, which led to better outcomes. They catch the errors enthusiasts miss. Another review found that AI explanations tend to increase blind trust rather than appropriate reliance — meaning the more you rely on AI, the more you need someone around who doesn’t trust it to keep you honest.

So this is a new axis of team diversity that we now have to think about. How do you compose your team so it doesn’t tilt too far in any direction? A good mix of all three types creates a structure that actually delivers — champions to explore, curious folks to operationalize, skeptics to pressure-test. Everyone matters here.

Your tech lead sets the ceiling

Tools are abundant. Practice is lacking. And the bottleneck is almost always leadership (apart from energy supply and RAM prices, obviously).

Here’s what I mean. If your team lead or CTO doesn’t visibly use AI in their own work, the rest of the team won’t either. Atlassian’s research found that when managers demo their own AI use, teams are 4× more likely to experiment. Four times. That’s not a marginal difference — that’s the difference between adoption happening and not happening.

We don’t fully understand yet what “AI adoption leadership” looks like as a defined skill set. Nobody teaches it the way we teach project management or agile. But some things are becoming clear: the leaders you hire into engineering roles — team leads, VPs of engineering, CTOs — need to be three things when it comes to AI.

They need to be competent — actually using AI tools themselves, not just talking about them. They need to be visible about it — showing the team how they use AI in their real work, not in a polished demo. And they need to set guardrails — defining what’s acceptable use and what’s not. Why you don’t paste your entire codebase into Claude for a quick answer. Why you review AI-generated code before committing. What “responsible use” means in your specific context.

In big companies, this falls under AI governance frameworks and risk management policies. In a startup? It’s your tech lead setting the tone through their daily actions. That’s your AI adoption strategy at the leadership level: less documentation, more demonstration.

The vibe coding trap

Now let’s talk about the elephant in the room. AI-assisted development is fast. Really, genuinely fast. Code, SQL, data structures — LLMs accelerate all of it. But the quality has taken a serious hit, and the requirements for quality have dropped too, because “the AI did it” has become a weird kind of excuse.

Veracode tested code generated by over 100 LLMs and found that 45% of it introduced security vulnerabilities aligned with the OWASP Top 10. A Cloud Security Alliance study found 62% of AI-generated solutions contain design flaws. Code churn is going up while meaningful refactoring is approaching zero.

There’s an enormous amount of vibe coding happening right now. Everyone ships an MVP in a weekend, it works, and then... The old wisdom was “throw away the MVP, now build it properly.” Now there’s this dangerous illusion that you can just keep riding on that superficial code. Keep prompting, keep patching, keep going. An MIT professor called AI “a brand new credit card for accumulating technical debt.” I think about that quote a lot.

The problem is how most teams inject AI into their process. They take a workflow built for humans, replace one step with an AI tool, and move on. But the handoff between human and AI and back creates context gaps that nobody wants to deal with. The documentation doesn’t match. The code review isn’t calibrated for AI output. The requirements weren’t written in a way that feeds naturally into Cursor or Claude Code.

The actual idea behind AI adoption in engineering is different. You shouldn’t be injecting AI into a human process — you should be redesigning the process assuming AI is part of it from the start. How do your requirements flow into the AI tool? How does the AI’s output get documented so the next feature is buildable? How does code review work when half the code was generated?

If your engineering process is already established, this is the hardest part. You need to basically redesign it on the fly. If you’re building from scratch — lucky you, you can do it right from day one.

Either way, just handing out Claude Code licenses is not it. People need help building new workflows. That’s what enabling actually means.

What this adds up to

Your AI adoption strategy for engineering teams comes down to three things: compose your team with the right mix of attitudes (don’t build a monoculture), make sure your leaders walk the walk (not just buy the tools), and redesign your development process to work with AI natively instead of bolting it on as an afterthought.

None of this is solved. There’s no playbook you can download. The companies figuring it out fastest are the ones treating it as continuous experimentation — trying things, measuring what changed, keeping what works, throwing away what doesn’t.

Which, if you think about it, is just good engineering.

Previously — Part 1: Your Tech Hiring Funnel Is Broken. Here’s What Works Now.

Next up — Part 3: Retaining engineers when their market value just doubled (coming soon).
