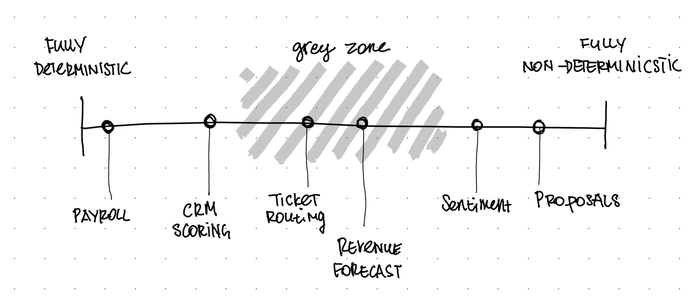

What it is: The ability to tell whether a business task needs a formula or a language model. Deterministic tasks (payroll, invoicing, compliance) have fixed rules and predictable outputs. Non-deterministic tasks (proposals, sentiment analysis, meeting summaries) depend on context and judgment — AI territory.

Why it matters: Most AI projects fail because leaders apply AI where a spreadsheet would do, or avoid it where rules can’t capture the nuance. The expensive mistakes live in the grey zone between the two.

How to build it: Classify your business tasks on a spectrum, run the consistency test, try the spreadsheet challenge, and map everything on a simple 2×2 matrix. Details and frameworks below.

I watched a founder spend four months and roughly €40,000 building an AI system to score incoming leads. The system analysed company data, cross-referenced public information, assessed email tone, and produced a score from 1 to 100.

It was impressive. It was also completely unnecessary for about 70% of what it did.

The lead scoring had two components. The first was straightforward: if the company is in our target industry, has more than 50 employees, and the contact is a decision-maker — score it high. Three fields, three conditions, a formula anyone could write in a CRM in an afternoon.
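To make the point concrete, here is a minimal sketch of what that first component might look like as plain code. The industry list, job titles, and field names are invented for illustration; the founder's real CRM fields are not described in this story.

```python
from dataclasses import dataclass

# Hypothetical values for illustration only; the real target industries
# and decision-maker titles are not given in the article.
TARGET_INDUSTRIES = {"logistics", "manufacturing"}
DECISION_MAKER_TITLES = {"ceo", "cto", "head of operations"}
MIN_EMPLOYEES = 50


@dataclass
class Lead:
    industry: str
    employees: int
    contact_title: str


def rule_based_score(lead: Lead) -> str:
    """Return "high" when all three conditions hold, otherwise "standard"."""
    in_target_industry = lead.industry.lower() in TARGET_INDUSTRIES
    big_enough = lead.employees > MIN_EMPLOYEES  # "more than 50 employees"
    is_decision_maker = lead.contact_title.lower() in DECISION_MAKER_TITLES
    if in_target_industry and big_enough and is_decision_maker:
        return "high"
    return "standard"


if __name__ == "__main__":
    print(rule_based_score(Lead("Logistics", 120, "CTO")))
```

Three fields, three conditions, no model involved. The same lead always gets the same score, and when a score looks wrong you can read the rule and see why.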

The second component was genuinely interesting: reading the actual text of the inbound message to assess buying intent, urgency, and whether this was a real enquiry or just someone downloading a whitepaper to avoid writing their own. That part? AI was perfect for it. No formula captures the difference between “we’re exploring options for next year” and “our current vendor just went down and we need someone by Friday.”

The problem is, nobody separated the two. The deterministic part — the stuff a spreadsheet handles perfectly — got mixed with the genuinely fuzzy stuff that only AI can do. The result was a system that was expensive to run, occasionally hallucinated on the simple parts, and was impossible to debug because nobody could tell which half was causing problems.

This is the single most common pattern I see in AI implementations that go sideways. Not bad technology. Not the wrong model. A fundamental confusion about which type of problem they’re actually solving.

The distinction that nobody teaches you

There’s a concept here that sounds academic but is actually the most practical thing you’ll learn about AI this year: the difference between deterministic and non-deterministic tasks.

Deterministic means predictable. Same input, same output, every time. Payroll calculations. Tax filings. Inventory reorder points. “If order quantity drops below 50, trigger a restock.” You know every field, every formula, every rule. You can write it down. A computer has been doing this perfectly since the 1970s.

Non-deterministic — or probabilistic, or stochastic, depending on who you ask — means variable. Same input, different output, and that’s actually fine. Summarising a client meeting. Drafting a proposal. Analysing whether a customer complaint is genuinely angry or just blunt. There’s no formula for this. The answer depends on context, nuance, and judgment. This is where AI earns its keep.

Sequoia Capital’s Konstantine Buhler called this shift “the biggest change in the use of tools since the advent of the computer.” And I think he’s right — not because the technology is new, but because it requires a fundamentally different way of thinking about what you’re automating.

The old approach was: understand the process completely, define every rule, then build. If you couldn’t define the rules, you couldn’t automate it. That’s why entire consulting industries exist to “map processes” before implementing CRM or ERP systems.

AI flips this. You can throw it at a problem you haven’t fully decomposed, and it’ll still produce something useful. Which is both its superpower and its biggest trap.

The reality isn’t binary. Every business task sits somewhere on a spectrum, and the interesting ones — the ones where money gets wasted — live in the grey zone.

Payroll is about as deterministic as it gets — same formula, same answer, always. Proposal writing is fully non-deterministic — creative, contextual, different every time. But ticket routing? That depends on whether you’re matching keywords (deterministic) or trying to understand what the customer actually needs (non-deterministic). Revenue forecasting? Formula-based on pipeline data, or AI-driven from market signals? The tasks you argue about are the ones worth thinking hardest about.

Where this goes wrong

The trap is subtle. Because AI can handle fuzzy tasks, people start using it for everything — including tasks that are perfectly deterministic. And that’s where money disappears.

I see this constantly. A company asks ChatGPT to calculate quarterly revenue by region. ChatGPT does it — sometimes correctly, sometimes not. Meanwhile, a pivot table in Excel would do it instantly, for free, with 100% accuracy, every time. They’re paying for AI tokens to do arithmetic.

Or worse: they build an AI workflow that mixes both types. Part of the process needs exact, repeatable results (pulling data from a database, applying a discount formula, formatting an invoice). Part of it genuinely needs AI (personalising a cover letter for the invoice, deciding which upsell to suggest based on the client’s recent communication). When these get tangled together, you get a system that’s expensive, unreliable, and nobody can explain why.
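One way to keep the two halves untangled, sketched in Python under some assumptions: the discount maths lives in an ordinary function, and the fuzzy step is passed in as a separate callable. The names `apply_discount`, `build_invoice`, and `draft_cover_note` are hypothetical, and `template_note` is a plain stand-in where a real language-model call would go.

```python
from decimal import Decimal, ROUND_HALF_UP
from typing import Callable


def apply_discount(subtotal: Decimal, discount_rate: Decimal) -> Decimal:
    """Deterministic step: the same inputs always give the same total."""
    total = subtotal * (Decimal("1") - discount_rate)
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def build_invoice(
    client: str,
    subtotal: Decimal,
    discount_rate: Decimal,
    draft_cover_note: Callable[[str, Decimal], str],
) -> dict:
    """Compute the numbers with a formula, then hand only the wording
    to the pluggable (possibly AI-backed) step."""
    total = apply_discount(subtotal, discount_rate)
    note = draft_cover_note(client, total)
    return {"client": client, "total": total, "cover_note": note}


def template_note(client: str, total: Decimal) -> str:
    """Stand-in for the fuzzy step; a real system might call a model here."""
    return f"Dear {client}, please find your invoice for {total} attached."


if __name__ == "__main__":
    invoice = build_invoice("Acme", Decimal("1000.00"), Decimal("0.10"), template_note)
    print(invoice["total"])
    print(invoice["cover_note"])
```

The design choice is the boundary, not the specific functions. The model never touches the arithmetic, so a wrong total points at the formula and an odd cover note points at the drafting step.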

The reverse mistake exists too, though it’s less common now. Some leaders avoid AI entirely for tasks where it would be dramatically better than rules — analysing free-text customer feedback, prioritising support tickets by actual urgency rather than keyword matching, drafting first versions of anything creative. They insist on rules-based approaches because rules feel safe and predictable. And they are — they’re also limited to problems where you can write down every possible scenario in advance.

Google’s former Chief Decision Scientist, Cassie Kozyrkov, put it simply: if you can write down the rules, use traditional programming. If you can’t articulate the recipe, that’s where machine learning belongs. The litmus test sounds easy, but applying it honestly to your own business is harder than it looks.

Why this is harder than it sounds

Part of the difficulty is psychological. We’ve spent decades working with deterministic software. Excel, CRMs, ERPs — every tool we’ve ever used before AI gave us the same answer every time. When you ask a spreadsheet to calculate something, it doesn’t “kind of” get it right. It’s exact. Always.

So when AI gives you a different summary of the same meeting transcript depending on when you run it, your instinct says “this is broken.” It’s not broken. That’s how probabilistic systems work. The variability is a feature for fuzzy tasks and a flaw for precise ones. The skill is knowing which you’re dealing with.

Then there’s the anthropomorphisation problem. Because AI talks to you in natural language, because it feels like a conversation, because you can ask it follow-up questions — people start treating it like a smart colleague rather than a probability engine. And when you treat it like a colleague, you trust it the way you’d trust a colleague: implicitly, for everything, without checking the type of task you’ve given it.

Research from Oxford found a genuine Dunning-Kruger pattern here: people with moderate AI knowledge are the most susceptible to over-trusting it. Not beginners — they’re cautious. Not experts — they know where it breaks. The dangerous zone is “knows enough to be confident, not enough to be careful.”

And then there’s plain old FOMO. The pressure to “do something with AI” before you’ve figured out what problems you actually have. A survey of over 800 business leaders found a recurring pattern: money is there, buy-in is there, but nobody has defined the problem they’re solving. They pick a tool before picking a problem, and they pick AI for tasks that a conditional formula would handle better.

How to build this instinct

I’m not going to give you a day-by-day training programme because, honestly, this isn’t that kind of skill. It’s more like developing taste — you build it by paying attention, making some mistakes, and reflecting on what went wrong. But there are a few things that accelerate the process.

Start by classifying what you already do. Pick 10 or 15 of your company’s recurring business tasks. For each one, ask: could I write an exact formula for this? If the answer is yes — if you could explain the logic to a new intern and they’d get the same result every time — it’s deterministic. If the answer is “it depends” or “you need judgment” or “sometimes the right answer isn’t obvious” — it’s non-deterministic. The tasks that generate debate are the interesting ones. That grey zone is where the real decisions live.

Run the consistency test. Take a task you’re currently using AI for. Run it three times, in three separate conversations, with the same input. If the results vary but every version would be acceptable, the task is genuinely non-deterministic and AI is a reasonable fit. If the results should be identical but they’re not (if you’re getting slightly different revenue numbers each time, for example), you’ve got a deterministic task in the wrong tool.
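If you would rather script the consistency test than run it by hand, a minimal sketch might look like this. `run_task` is a hypothetical placeholder for however you call your AI tool; the two stand-in functions below only simulate a stable and an unstable answer so the example runs on its own.

```python
from itertools import cycle
from typing import Callable


def consistency_test(run_task: Callable[[str], str], task_input: str, runs: int = 3) -> dict:
    """Run the same task several times and report how many distinct answers came back."""
    outputs = [run_task(task_input) for _ in range(runs)]
    distinct = len(set(outputs))
    return {"outputs": outputs, "distinct_outputs": distinct, "consistent": distinct == 1}


# Stand-in for a task that always returns the same answer.
def stable_task(task_input: str) -> str:
    return "Q3 revenue: 120000"


# Stand-in for a task whose answer drifts between runs.
_drifting_answers = cycle(["Q3 revenue: 120000", "Q3 revenue: 118500", "Q3 revenue: 121200"])


def drifting_task(task_input: str) -> str:
    return next(_drifting_answers)


if __name__ == "__main__":
    print(consistency_test(stable_task, "sales.csv")["consistent"])
    print(consistency_test(drifting_task, "sales.csv")["distinct_outputs"])
```

The script only counts exact differences. Whether a difference matters is still your call: three differently worded meeting summaries are fine, three different revenue figures are not.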

Try the spreadsheet challenge. Take one thing you use AI for this week. Try doing it in Excel or Google Sheets instead. If the spreadsheet version works — same accuracy, same speed, no judgment required — you’re paying for AI you don’t need. I’ve done this exercise with clients and seen entire “AI initiatives” collapse into a couple of well-written formulas. That’s not a failure. That’s saving money.

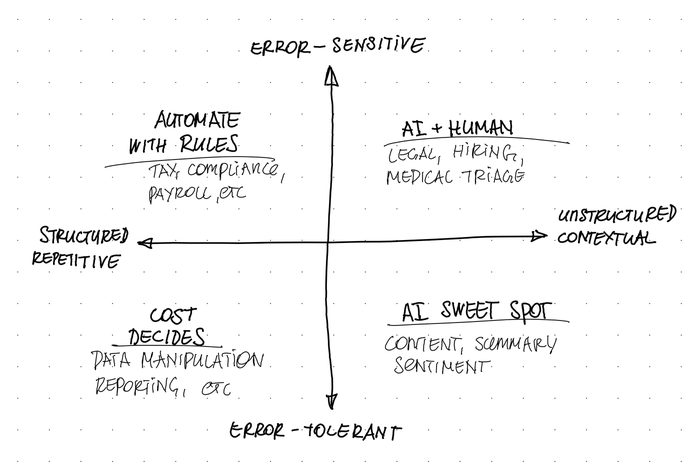

Think in quadrants. Once you’ve classified a handful of tasks, place them on two axes: how structured and repetitive is the input, and how sensitive to errors is the output? This gives you four zones, each with a different answer.

The bottom-right — unstructured input, tolerant to variation — is where AI delivers the easiest wins. Content drafts, brainstorming, summarisation, sentiment analysis. Let it run, review the output, move on.

The top-left — structured input, error-sensitive — is where AI is actively wasteful. Tax calculations, compliance reporting, payroll. Write the formula. Don’t pay for tokens to do arithmetic.

The top-right is where it gets interesting: unstructured and error-sensitive. Hiring screening, legal review, client risk assessment. AI can do the heavy lifting, but a human needs to verify before anything goes live. This is the domain of human-in-the-loop patterns — AI drafts, human decides.

And the bottom-left — structured but low-stakes — is where cost decides. Either approach works. At 10 tasks a day, a formula is simpler. At 10,000 a day, AI might be cheaper even for repetitive work. Do the maths for your volume.
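The four zones can also be written down as a small lookup, which is a handy way to check that you have placed a task consistently. This is only a sketch: reducing each axis to a yes/no answer is a simplification of my own, and the function name and return labels are invented for illustration.

```python
def quadrant(structured_input: bool, error_sensitive: bool) -> str:
    """Map the two axes of the 2x2 onto a recommended approach."""
    if structured_input and error_sensitive:
        return "formula"  # top-left: tax, compliance reporting, payroll
    if not structured_input and error_sensitive:
        return "AI drafts, human decides"  # top-right: hiring screening, legal review
    if not structured_input and not error_sensitive:
        return "AI"  # bottom-right: drafts, brainstorming, summarisation
    return "either, decide on cost"  # bottom-left: structured but low-stakes


if __name__ == "__main__":
    tasks = {
        "payroll": (True, True),
        "legal review": (False, True),
        "meeting summary": (False, False),
    }
    for name, (structured, sensitive) in tasks.items():
        print(f"{name}: {quadrant(structured, sensitive)}")
```

In practice most tasks will not be a clean yes or no on either axis, which is exactly where the arguments worth having start.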

The point of all these exercises isn’t to produce a definitive classification. Every business is different, and the boundary between deterministic and non-deterministic shifts as your data improves, as tools evolve, and as your team builds experience. The point is to develop the reflex of asking the question before you start spending.

The conscious choice

Here’s the nuance I want to land on, because I think it’s the part that gets lost in most “AI strategy” advice.

Using AI for a deterministic task isn’t always wrong. Sometimes you throw ChatGPT at something you could write a formula for, simply because you don’t want to spend the time defining the rules. I do this. Everyone does this. The annual report that needs 12 specific numbers pulled from 4 spreadsheets — could I write the formulas? Sure. Do I sometimes just paste the data into Claude and say “give me the summary”? Absolutely.

The difference is doing it consciously. Knowing that you’re making a trade-off — paying a bit more, accepting a small error risk, saving yourself the effort of formalising the rules. That’s a legitimate business decision.

What’s not legitimate is doing it by default. Not knowing which type of problem you’re dealing with. Not asking the question. Treating every business task as equally suited to AI because “AI can do anything now.”

It can do a lot. But the things it does well and the things a formula does well are different categories, and the leader who can tell them apart will spend less, fail less, and build systems that actually work.

Andrew Ng demonstrated something that stuck with me: he took GPT-3.5, the older, cheaper model, wrapped it in a well-designed workflow with checks and iterations, and it outperformed GPT-4 used naively. The weaker model with good process design beat the stronger model with no process design, roughly 95% to 67% on the HumanEval coding benchmark.

That’s the whole argument in one data point. It’s not about which AI you buy. It’s about whether you understood the problem before you bought it.